What is Natural Language Processing?

How NLP powers AI systems to understand human communication, automate text workflows, improve interactions, and drive enterprise efficiency.

Natural Language Processing (NLP) has quietly become the nervous system of modern AI products. Any time a user types, speaks, searches, or asks a question, there is almost always an NLP layer interpreting that intent and translating it into something a machine can reason about.

The scale of this shift is not theoretical. The global Natural Language Processing (NLP) market is projected to grow from 18.9 billion USD in 2023 to 68.1 billion USD by 2028, a compound annual growth rate of just over 29%. At the same time, surveys indicate that a growing majority of businesses are already using NLP-powered tools for chatbots, customer support, document processing, and search.

AI is not just adding features. It is compressing work. Multiple studies show that AI-assisted workflows can increase productivity by up to 40% for certain knowledge tasks, particularly when employees are trained to use these systems effectively. NLP is the logic that sits between humans and these AI systems, turning messy language into structured signals and then back into fluent responses.

“The hardest part of intelligence is understanding.”

— Fei-Fei Li, CEO of World Labs

In this guide, we will walk through Natural Language Processing (NLP) from first principles to modern practice, explain how NLP powers everything from search boxes to copilots, and show how Tericsoft uses NLP and LLMs to build enterprise grade AI solutions.

Introduction: Why Natural Language Processing (NLP) Is Everywhere Today

Natural Language Processing (NLP) is the invisible engine behind many everyday interactions.

Scenario 1: Refund status in a few keystrokes

You type “refund status?” into a support portal. Under the hood:

- NLP parses your natural language query.

- Classifies it as a refund related intent.

- Extracts entities like order ID or dates if present.

- Routes the request to the right system.

- Generates a human like response summarizing the status.

To you, it feels like a simple chat. Underneath, an NLP pipeline has transformed language into structured actions.
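The first steps of that pipeline can be sketched in a few lines. This is a minimal, rule-based illustration, not how a production support system works: the keyword lists, the intent names, and the `ORD-1234` style order ID format are all assumptions made up for the example, and a real system would typically use a trained classifier and entity extractor instead.

```python
import re

# Hypothetical intents and keywords, for illustration only.
INTENT_KEYWORDS = {
    "refund_status": ["refund", "money back"],
    "order_tracking": ["where is my order", "track", "delivery"],
}

# Assumed order ID format: "ORD-" followed by digits.
ORDER_ID_PATTERN = re.compile(r"\bORD-\d+\b", re.IGNORECASE)


def classify_intent(text):
    """Return the first intent whose keywords appear in the text."""
    lowered = text.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return intent
    return "unknown"


def extract_order_id(text):
    """Return the order ID if one is present, otherwise None."""
    match = ORDER_ID_PATTERN.search(text)
    return match.group(0).upper() if match else None


def handle_query(text):
    """Turn free text into a structured request a backend could route."""
    return {
        "intent": classify_intent(text),
        "order_id": extract_order_id(text),
    }


print(handle_query("refund status?"))
print(handle_query("Refund status for ord-1042?"))
```

The point of the sketch is the shape of the output: a short, messy query becomes a small structured record that downstream systems can act on.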

Scenario 2: Voice assistants and conversational interfaces

You ask Alexa, or any voice assistant, “Play relaxing jazz for an hour.”

Natural Language Processing (NLP) pipelines:

- Convert speech to text.

- Use Natural Language Understanding (NLU) to interpret “relaxing jazz” and “for an hour.”

- Map this to playlists, timers, and device controls.

- Use Natural Language Generation (NLG) to respond politely and confirm.

A useful analogy is to think of a human conversation:

- Your thoughts and interpretation of what someone said are like NLU.

- Your spoken response is like NLG.

In the same way, Natural Language Processing (NLP) gives machines a bridge between human communication and machine logic, which is why NLP has become a foundational skill for any AI first product or platform.

What Is Natural Language Processing (NLP)?

At its simplest, Natural Language Processing (NLP) is the field of AI that helps computers understand, interpret, and generate human language.

A more technical definition:

Natural Language Processing (NLP) combines linguistics, machine learning, and deep learning to map unstructured text and speech into structured representations that models can analyze, and then convert model outputs back into natural language.

NLP acts as the bridge between how humans express themselves and how machines represent information. It draws from:

- Linguistics for syntax, semantics, and grammar.

- Machine learning for pattern recognition.

- Deep learning for high capacity representation and reasoning.

Within Natural Language Processing (NLP), it is helpful to distinguish between three closely related ideas.

Natural Language Understanding (NLU)

Natural Language Understanding (NLU) focuses on what the text or speech actually means.

Typical NLU tasks include:

- Intent classification

- Entity extraction

- Semantic similarity

- Coreference resolution

- Emotion or sentiment analysis

In an email support system using NLU, “I am really disappointed with the last shipment” would be recognized as a complaint with negative sentiment about a recent order.

Natural Language Generation (NLG)

Natural Language Generation (NLG) handles the production side: generating human like text based on some structured input or internal representation.

Common NLG applications include:

- Email or reply drafting

- Report summarization

- Chatbot responses

- Product description generation

When an LLM writes a concise, empathetic reply to that same complaint, NLG is the stage that turns the internal reasoning into coherent language.

Natural Language Processing (NLP) systems typically combine NLU and NLG, with additional reasoning layers in between.

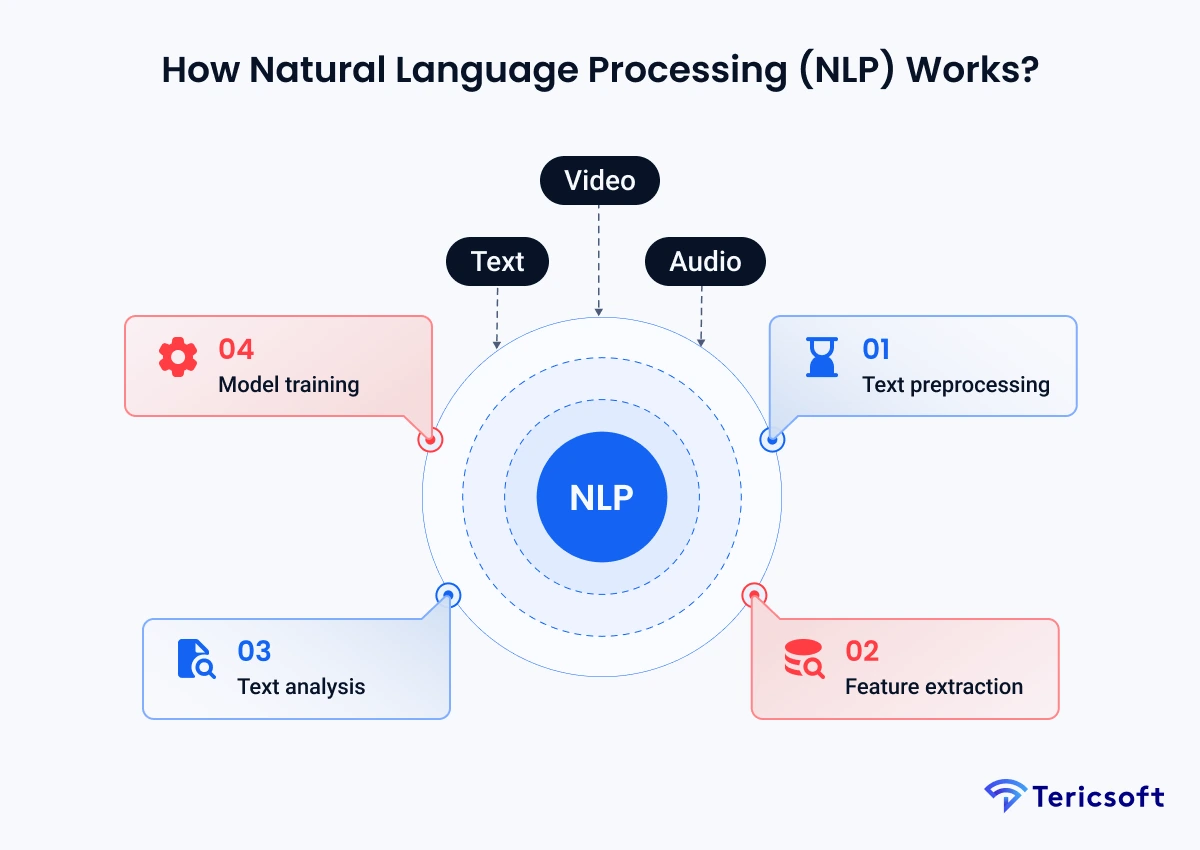

How Natural Language Processing (NLP) Works

To make Natural Language Processing (NLP) concrete, it helps to think of it as a pipeline. At a high level, most NLP systems move through these stages:

- Text preprocessing

- Feature extraction

- Text analysis

- Model training and inference

You can map this to how humans think:

- We listen, clean up what we heard in our mind, and focus on key words (preprocessing).

- We form internal representations of meaning (feature extraction and analysis).

- We match those patterns to past experience (model reasoning).

- Then we respond.

Text preprocessing

Text preprocessing prepares raw language for further analysis. In Natural Language Processing (NLP), this stage helps make data consistent and easier to model.

Key steps:

- Tokenization: splitting text into words, subwords, or characters.

- Normalization: lowercasing, removing punctuation or special characters as needed.

- Lemmatization or stemming: reducing words to their base forms (running → run).

- Stopword handling: optionally removing very common words that add little meaning in some tasks.

Example with the query:

“What’s the weather in Dubai?”

Text preprocessing in an NLP pipeline might convert this into tokens like: ["what", "is", "the", "weather", "in", "dubai", "?"] or subword pieces depending on the tokenizer.
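A minimal sketch of these preprocessing steps, using only the Python standard library, could look like the following. The contraction table and stopword list are tiny hand-written assumptions for the example; real pipelines usually rely on a library tokenizer or a subword tokenizer shipped with the model.

```python
import re

# Tiny hand-written tables, for illustration only.
CONTRACTIONS = {"what's": "what is", "it's": "it is"}
STOPWORDS = {"the", "is", "in", "a", "an"}


def normalize(text):
    """Lowercase, unify apostrophes, and expand a few contractions."""
    text = text.lower().replace("’", "'")
    for short, full in CONTRACTIONS.items():
        text = text.replace(short, full)
    return text


def tokenize(text):
    """Split into word tokens and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def preprocess(text, remove_stopwords=False):
    tokens = tokenize(normalize(text))
    if remove_stopwords:
        tokens = [token for token in tokens if token not in STOPWORDS]
    return tokens


print(preprocess("What’s the weather in Dubai?"))
# ['what', 'is', 'the', 'weather', 'in', 'dubai', '?']
print(preprocess("What’s the weather in Dubai?", remove_stopwords=True))
# ['what', 'weather', 'dubai', '?']
```

Stopword removal is left optional here because, as noted above, it helps some tasks and hurts others.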

Feature extraction

Before neural models became dominant, Natural Language Processing (NLP) relied heavily on feature extraction to convert text into numerical vectors.

Classic techniques include:

- Bag of Words: representing documents by word counts.

- TF-IDF (term frequency, inverse document frequency): weighting each word by how often it appears in a document and how rare it is across the whole collection.
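Both techniques are small enough to write out by hand. The sketch below uses the plain, unsmoothed formula, term frequency times log(N / document frequency); libraries often apply smoothing and normalization on top of this, so their numbers will differ. The three example documents are made up.

```python
import math
from collections import Counter


def bag_of_words(tokens):
    """Represent a document as raw word counts."""
    return Counter(tokens)


def tf_idf(documents):
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(documents)

    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter()
    for tokens in documents:
        doc_freq.update(set(tokens))

    weights = []
    for tokens in documents:
        counts = bag_of_words(tokens)
        total = len(tokens)
        weights.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


# Made-up example documents, already tokenized.
docs = [
    ["refund", "for", "my", "order"],
    ["track", "my", "order"],
    ["cancel", "my", "subscription"],
]

for doc_weights in tf_idf(docs):
    print(doc_weights)
```

In this toy collection, "my" appears in every document, so its weight is zero, while "refund" appears in only one and gets the highest weight in the first document.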

With deep learning, more expressive representations emerged:

- Word embeddings such as Word2Vec, GloVe, and FastText, which map each word into a dense vector so that similar words are close together in vector space.

- Transformer embeddings from models like BERT or other modern encoders that capture context dependent meaning, not just isolated word identities.

In modern NLP, feature extraction is often handled implicitly by the neural architecture, but the core idea is unchanged: turn language into numbers that preserve meaning.
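To see what "close together in vector space" means in practice, here is a small cosine similarity sketch. The three-dimensional vectors are invented by hand for the example; real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

# Hand-made toy vectors, NOT real embeddings.
TOY_EMBEDDINGS = {
    "refund": [0.9, 0.1, 0.0],
    "reimbursement": [0.8, 0.2, 0.1],
    "jazz": [0.0, 0.1, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


similar = cosine_similarity(TOY_EMBEDDINGS["refund"], TOY_EMBEDDINGS["reimbursement"])
unrelated = cosine_similarity(TOY_EMBEDDINGS["refund"], TOY_EMBEDDINGS["jazz"])
print(f"refund vs reimbursement: {similar:.2f}")
print(f"refund vs jazz: {unrelated:.2f}")
```

With these toy values, "refund" and "reimbursement" score close to 1, while "refund" and "jazz" score close to 0. Semantic search and similarity tasks rest on this same comparison.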

Text analysis

Once text is numerically represented, Natural Language Processing (NLP) applies various text analysis tasks, such as:

- Named Entity Recognition (NER) to detect people, locations, organizations, and other entities.

- Part of Speech (POS) tagging to label nouns, verbs, adjectives, etc.

- Sentiment analysis to detect polarity: positive, negative, neutral.

- Topic modeling to find latent themes in large document sets.

For example, an NLP pipeline for a bank might run NER and sentiment analysis on complaints to detect which products, branches, or regions are most frequently associated with dissatisfaction.
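A heavily simplified version of that bank scenario is sketched below. It substitutes word lists for a trained sentiment model and a fixed list of product names for a trained NER model, so it only illustrates the flow of the analysis. The word lists, product names, and complaints are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical lexicons and product names, for illustration only.
NEGATIVE_WORDS = {"disappointed", "unacceptable", "slow", "declined", "error"}
POSITIVE_WORDS = {"great", "helpful", "fast", "resolved"}
PRODUCTS = {"credit card", "mortgage", "mobile app"}


def sentiment(text):
    """Lexicon-based polarity: count positive words minus negative words."""
    words = re.findall(r"[a-z]+", text.lower())
    score = sum(w in POSITIVE_WORDS for w in words) - sum(w in NEGATIVE_WORDS for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def find_products(text):
    """Dictionary lookup standing in for named entity recognition."""
    lowered = text.lower()
    return [product for product in sorted(PRODUCTS) if product in lowered]


def negative_mentions(complaints):
    """Count how often each product appears in a negative message."""
    counts = Counter()
    for text in complaints:
        if sentiment(text) == "negative":
            counts.update(find_products(text))
    return counts


complaints = [
    "My credit card was declined twice, unacceptable.",
    "The mobile app is slow and shows an error.",
    "Mortgage advisor was helpful and fast.",
    "Credit card support was slow.",
]
print(negative_mentions(complaints).most_common())
```

Lexicon approaches like this miss sarcasm and negation ("not helpful"), which is one reason trained models replaced them for most production use.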

Model training

In the model training stage, Natural Language Processing (NLP) uses statistical or neural models to learn patterns from data.

Historically, this involved:

- Naive Bayes, SVMs, Logistic Regression for classification.

- Hidden Markov Models and CRFs for sequence tagging.
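As one concrete instance of these classical models, here is a minimal multinomial Naive Bayes text classifier with add-one (Laplace) smoothing, written from scratch. The class name, the labels, and the four training tickets are assumptions for the example; in practice you would train on far more data and likely use an established library implementation.

```python
import math
from collections import Counter, defaultdict


class NaiveBayesClassifier:
    """Multinomial Naive Bayes over tokenized documents."""

    def __init__(self):
        self.class_counts = Counter()            # documents per label
        self.word_counts = defaultdict(Counter)  # word counts per label
        self.vocabulary = set()

    def fit(self, documents, labels):
        for tokens, label in zip(documents, labels):
            self.class_counts[label] += 1
            self.word_counts[label].update(tokens)
            self.vocabulary.update(tokens)

    def predict(self, tokens):
        total_docs = sum(self.class_counts.values())
        vocab_size = len(self.vocabulary)
        best_label, best_score = None, float("-inf")
        for label, doc_count in self.class_counts.items():
            # Work in log space to avoid multiplying many tiny numbers.
            score = math.log(doc_count / total_docs)
            total_words = sum(self.word_counts[label].values())
            for token in tokens:
                count = self.word_counts[label][token]
                # Add-one smoothing so unseen words do not zero out a class.
                score += math.log((count + 1) / (total_words + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Made-up training tickets.
train_docs = [
    ["refund", "not", "received"],
    ["where", "is", "my", "refund"],
    ["reset", "my", "password"],
    ["cannot", "login", "password"],
]
train_labels = ["billing", "billing", "account", "account"]

model = NaiveBayesClassifier()
model.fit(train_docs, train_labels)
print(model.predict(["refund", "please"]))
print(model.predict(["forgot", "password"]))
```

Note that the features here are just word counts, which is exactly the kind of manual representation choice that later neural approaches made less necessary.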

Deep learning then introduced:

- CNNs for sentence classification.

- RNNs and LSTMs for sequence modeling.

- Transformers, which have now become the default architecture, achieving state of the art performance on many NLP benchmarks.

Transformers significantly reduced the need for manual feature engineering, since they learn powerful contextual representations directly from large corpora.

NLP Model: The Architectures Behind Modern Language Systems

The phrase NLP model covers a spectrum of architectures. Each generation solved some challenges and exposed new ones.

Convolutional Neural Network

Convolutional Neural Networks (CNNs) in the context of an NLP model were popular for tasks like sentence classification and text categorization. They scan windows of text and learn local patterns, such as phrases or n-grams that correlate with sentiment or intent.

CNN based NLP models are:

- Efficient

- Good for shorter texts

- Limited in modeling long range dependencies
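The core mechanic, a filter sliding over windows of tokens followed by max pooling, can be shown without any deep learning library. In this sketch the token vectors and the filter weights are set by hand so the filter fires on the bigram "not working"; in a real CNN both would be learned during training.

```python
# Hand-set 2-dimensional token vectors (hypothetical); unknown words map to zeros.
TOKEN_VECTORS = {
    "not": [1.0, 0.0],
    "working": [0.0, 1.0],
}

# A width-2 filter: one weight vector per position in the window.
# It responds when "not" is immediately followed by "working".
BIGRAM_FILTER = [[1.0, 0.0], [0.0, 1.0]]


def embed(sentence):
    return [TOKEN_VECTORS.get(token, [0.0, 0.0]) for token in sentence.split()]


def conv1d_max_pool(token_vectors, filter_weights, bias=0.0):
    """Slide the filter over every window, apply ReLU, keep the max."""
    width = len(filter_weights)
    activations = []
    for start in range(len(token_vectors) - width + 1):
        window = token_vectors[start:start + width]
        total = bias
        for vector, weights in zip(window, filter_weights):
            total += sum(x * w for x, w in zip(vector, weights))
        activations.append(max(0.0, total))  # ReLU
    return max(activations) if activations else 0.0


print(conv1d_max_pool(embed("app is not working"), BIGRAM_FILTER))
print(conv1d_max_pool(embed("working app is not"), BIGRAM_FILTER))
```

The first sentence produces a strong activation and the second produces none, even though both contain the same words. The filter only sees what fits inside its window, which is why CNNs capture local phrases well but struggle with long range dependencies.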

Recurrent Neural Network

The Recurrent Neural Network (RNN) family, including LSTMs and GRUs, treats text as a sequence. This made RNN based NLP models powerful for:

- Language modeling

- Machine translation

- Sequence tagging

However, RNNs struggle with:

- Long sequences

- Parallelization across tokens

- Capturing very long range context reliably
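A toy recurrence makes two of these limitations visible. The sketch below uses a single scalar hidden state and hand-picked weights, which is a drastic simplification of a real RNN (those use learned weight matrices and vector states), but the update rule has the same form.

```python
import math


def rnn_forward(inputs, w_input, w_hidden, bias=0.0):
    """Run a one-unit RNN: h_t = tanh(w_input * x_t + w_hidden * h_{t-1} + bias)."""
    hidden = 0.0
    states = []
    for x in inputs:
        # Each step needs the previous hidden state, so steps cannot run in parallel.
        hidden = math.tanh(w_input * x + w_hidden * hidden + bias)
        states.append(hidden)
    return states


# A signal at the first position, followed by "empty" inputs.
states = rnn_forward([1.0, 0.0, 0.0, 0.0], w_input=1.0, w_hidden=0.5)
for step, value in enumerate(states):
    print(step, round(value, 4))
```

The loop is inherently sequential, and with these weights the trace of the first input shrinks at every later step. This forward fading is only an intuition aid; the related training problem is vanishing gradients, which LSTMs and GRUs were designed to reduce.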

Autoencoders

Autoencoders in an NLP model compress text into a lower dimensional latent representation and then reconstruct it. They are used for:

- Dimensionality reduction

- Anomaly detection

- Representation learning

For example, an autoencoder trained on normal support ticket descriptions could flag unusual patterns when reconstruction error is high.

Transformers

No modern NLP model discussion is complete without Transformers.

Transformers rely on self attention, which lets the model weight the importance of every token relative to every other token in a sequence. This led to major gains in translation quality and other NLP tasks, while enabling much more parallel training compared to RNNs.
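The weighting step can be sketched as scaled dot-product attention in plain Python. One important simplification: real Transformers apply learned query, key, and value projections and run several attention heads in parallel, whereas this sketch uses the input vectors directly for all three roles. The input vectors are made up.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Compare this token with every token in the sequence, itself included.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The new representation is a weighted average of all token vectors.
        output = [
            sum(weight * value[i] for weight, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors: the first two are identical, the third differs.
outputs, weights = self_attention([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
for row in weights:
    print([round(weight, 3) for weight in row])
```

Each row of weights belongs to one token and does not depend on the other rows, so all rows can be computed at the same time. That independence is what allows the parallel training mentioned above.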

Transformers paved the way for:

- Pretrained language models like BERT

- Large Language Models (LLMs) like GPT, Llama, Claude, and others

- Multimodal models that handle text, image, and beyond

Modern Natural Language Processing (NLP) at scale is almost always Transformer based.

Benefits of NLP: Why Enterprises Depend On It

The benefits of NLP are both tactical and strategic.

Natural Language Processing (NLP) enables enterprises to:

- Improve customer experience: NLP-powered chatbots and virtual assistants provide around-the-clock support for routine queries, elevating satisfaction and reducing wait times.

- Automate workflows: Email triage, ticket classification, document routing, and information extraction all reduce manual overhead.

- Handle multilingual communication: NLP models can translate, normalize, and analyze language across regions, which is vital for global products.

- Strengthen compliance and governance: NLP can scan contracts, policies, and communications for risky terms or regulatory misalignment.

- Increase operational efficiency: AI and NLP-driven automation can lift productivity significantly, sometimes in the range of 14-40% when properly adopted and embedded into employee workflows.

For many organizations, these benefits form the foundation on which Enterprise LLM adoption is built.

NLP Example: Real Scenarios Explained Simply

To make the concept of an NLP example more tangible, consider a few distinct use cases.

1. Email sentiment classification

A customer success team receives thousands of emails per week. An NLP model:

- Detects sentiment

- Flags urgent or negative messages

- Suggests response templates

This Natural Language Processing (NLP) system turns a chaotic inbox into a prioritized queue.

2. Chatbot understanding and response generation

A support chatbot:

- Uses NLU to understand intent and entities

- Uses RAG or internal search to retrieve relevant knowledge

- Uses NLG to generate a helpful answer

Here, Natural Language Processing (NLP) is the backbone that moves from “What did the user say?” to “What should we say back?”
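The retrieve-then-respond flow can be sketched as follows. Word overlap stands in for embedding-based retrieval, and a fixed template stands in for an LLM generating the answer; the knowledge base entries are invented for the example.

```python
import re

# Hypothetical help-center snippets.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days of approval.",
    "Passwords can be reset from the account settings page.",
    "Orders ship within 2 business days.",
]


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents):
    """Return the document sharing the most words with the question."""
    question_tokens = tokenize(question)
    return max(documents, key=lambda doc: len(question_tokens & tokenize(doc)))


def answer(question):
    """Ground the reply in retrieved text instead of answering from memory."""
    context = retrieve(question, KNOWLEDGE_BASE)
    return f"Based on our help center: {context}"


print(answer("How long do refunds take?"))
print(answer("How do I reset my password?"))
```

Even in this toy form, the design choice is visible: the response is built from retrieved content, so updating the knowledge base changes the answers without retraining anything.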

3. Fraud detection using text patterns

In finance or e commerce, descriptions, notes, and justifications contain signals. NLP models can learn patterns in language associated with fraudulent claims and route suspicious cases for review.

4. Healthcare clinical notes

In healthcare, Natural Language Processing (NLP) can extract diagnoses, medications, and risk factors from unstructured clinical notes, helping downstream models for risk prediction, coding, or research.

5. Search relevance in e commerce

Search relevance is an excellent NLP example. Users type vague, shorthand, or even misspelled queries. NLP models:

- Normalize queries

- Understand intent and attributes

- Rank products by semantic relevance

Without Natural Language Processing (NLP), search would feel brittle.
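A minimal sketch of query normalization and ranking, using the standard library's `difflib` for fuzzy matching, is shown below. The product names are made up, and ranking by shared words is a simple stand-in for the semantic ranking a real search system would do with embeddings.

```python
import difflib

# Hypothetical product catalog.
PRODUCTS = ["wireless headphones", "running shoes", "laptop charger"]

# Vocabulary of known terms, derived from the catalog.
CATALOG_TERMS = sorted({word for name in PRODUCTS for word in name.split()})


def normalize_query(query):
    """Lowercase the query and snap misspelled words to known catalog terms."""
    corrected = []
    for word in query.lower().split():
        matches = difflib.get_close_matches(word, CATALOG_TERMS, n=1, cutoff=0.75)
        corrected.append(matches[0] if matches else word)
    return corrected


def rank_products(query):
    """Order products by how many normalized query terms they contain."""
    terms = set(normalize_query(query))
    return sorted(
        PRODUCTS,
        key=lambda name: len(terms & set(name.split())),
        reverse=True,
    )


print(normalize_query("Wireles hedphones"))
print(rank_products("Wireles hedphones")[0])
```

The misspelled query still finds the right product because normalization happens before matching. The `cutoff` value of 0.75 is an arbitrary choice for this example and would need tuning against real queries.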

Challenges of NLP: What Makes Language So Hard?

The challenges of NLP are what make the field so interesting.

Some recurring difficulties:

- Ambiguity: “Can you book the table near the window?” might refer to a restaurant or a database table, depending on context.

- Sarcasm and idioms: “Great, another outage” could be negative despite the ostensibly positive word.

- Multilingual and code switching: Users mix languages or dialects, pushing Natural Language Processing (NLP) models beyond standard corpora.

- Domain adaptation: Models trained on general web text can misinterpret specialized legal, medical, or financial language.

- Bias and fairness: If training data encodes bias, NLP models often replicate or even amplify it.

- Data scarcity in niche domains: High-quality labeled data is often limited, especially in regulated industries.

These challenges are partly why the shift from classic NLP models to LLMs, hybrid retrieval, and continuous learning is so important.

The Future of NLP: Beyond Models to Intelligence

The future of NLP is not just about slightly better benchmarks. It is about moving from language processing to language grounded intelligence.

Key directions:

- Large Language Models (LLMs): LLMs extend classical NLP by offering powerful few-shot learning, reasoning, and generation capabilities across tasks.

- Multimodal models: Models that combine text with images, audio, or even structured data unlock richer understanding of user context.

- Hybrid NLP plus knowledge graphs: Combining Natural Language Processing (NLP) with explicit knowledge graphs improves factuality and interpretability.

- Contextual retrieval: RAG architectures bring in domain-specific data at inference time, bridging the gap between general models and private corpora.

- Agentic systems: NLP pipelines are evolving into agents that can reason, take actions, call tools, and collaborate with humans over long horizons.

- Continuous learning and Liquid LLM: Instead of retraining models in large, infrequent batches, enterprises are exploring systems that learn from interactions, corrections, and outcomes in a more fluid way.

For a deeper dive into continuous learning and Liquid LLM style architectures, see Tericsoft’s perspective here:

“Understanding natural language is one of the key challenges that deep learning seeks to address.”

— Yoshua Bengio, Professor at Université de Montréal

How Tericsoft Builds NLP and Enterprise LLM Solutions

At Tericsoft, Natural Language Processing (NLP) is not an isolated discipline. It is the connective tissue that ties together LLMs, retrieval systems, and agentic workflows.

Tericsoft builds end to end NLP and LLM driven applications for enterprises across SaaS, FinTech, HealthTech, Retail, and EdTech. Our work spans:

- Custom NLP model development: Classification, summarization, NER, entity linking, semantic search, and topic modeling.

- Enterprise-grade LLM integrations: Using models such as GPT, Claude, Llama, Mistral, Cohere, DeepSeek and others, depending on data sensitivity, cost, and deployment constraints.

- RAG-powered contextual retrieval systems: Connecting Natural Language Processing (NLP) front ends with internal knowledge bases, policies, contracts, and logs.

- Document intelligence pipelines: Combining OCR, NLP, and LLM reasoning to process forms, contracts, medical records, and financial statements.

- AI-powered MVP development: Rapidly delivering NLP and LLM-centric products using internal frameworks and Super Engineer style automation.

- Model fine-tuning and evaluation: Using industry-standard tools such as LangChain, LlamaIndex, and evaluation frameworks to ensure stability, groundedness, and fairness.

Our approach bridges classical NLP and modern LLM architectures, helping enterprises:

- Unlock insights from unstructured text.

- Automate complex language heavy workflows.

- Build intelligent copilots and agents.

- Operate private, secure, compliant AI systems.

Natural Language Processing (NLP) started as a way to make computers read text. It has become the foundation of how organizations listen, think, and respond at scale. As LLMs, retrieval, and agentic systems mature, NLP will continue to evolve from pattern recognition into context aware intelligence.

For enterprises that want to lead, not follow, the question is no longer “Should we use NLP?” but “How do we design the right NLP and LLM stack that reflects our domain, our customers, and our future?”

That is the kind of problem Tericsoft exists to solve.

NLP turns human text or speech into structured data machines can use, enabling intent detection, entity extraction, and natural replies.

NLP breaks text into tokens, cleans it, converts it into vectors, and uses models like transformers to understand context and generate output.

Core parts include tokenization, embeddings, NLU, NLG, transformer models, training data, and pipelines for deployment.

NLP powers chatbots, voice assistants, search, document automation, copilots, summarizers, and AI tools that understand human language.

NLP struggles with sarcasm, ambiguity, domain shifts, bias, multilingual text, data needs, and ensuring accurate real-world context.